MimiCook: A Cooking Assistant System with Situated Guidance

Abstract

People often refer to written instructions when making things, but the gap between a written description and the actual context of making causes difficulties. For example, when cooking from a recipe, people may lose track of their current position in the recipe, misjudge the required amount of an ingredient because of complicated measuring units, or skip steps by mistake. We address these problems in the domain of cooking. Our proposed cooking support system, MimiCook, embodies a recipe on a real kitchen counter and guides the user directly. The system consists of a computer, a depth camera, a projector, and a scaling device. It projects step-by-step instructions directly onto the utensils and ingredients and adapts the guidance display to the user's current situation. The integrated scaling device also helps users avoid mistakes with measuring units. The results of our user study show that participants found it easier to cook with the system, and that even participants who had never cooked the assigned recipe made no mistakes.
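One way a scale can remove confusion over measuring units is to convert every recipe quantity to grams, so the target can be compared directly with the scale reading. The sketch below is a hypothetical illustration, not MimiCook's published method. It assumes Japanese measuring conventions (1 cup = 200 ml, 1 tbsp = 15 ml, 1 tsp = 5 ml). The ingredient densities in `DENSITY_G_PER_ML` are illustrative placeholders, and the names `to_grams` and `remaining_grams` are invented for this example.

```python
# Hypothetical sketch: normalize recipe quantities to grams so that a
# kitchen-scale reading can be compared against them directly.

# Volume per unit in millilitres (Japanese measuring conventions assumed).
ML_PER_UNIT = {"ml": 1.0, "tsp": 5.0, "tbsp": 15.0, "cup": 200.0}

# Mass units expressed in grams.
G_PER_UNIT = {"g": 1.0, "kg": 1000.0}

# Illustrative densities in g/ml (assumed values; real ones vary by product).
DENSITY_G_PER_ML = {"water": 1.0, "soy sauce": 1.2, "sugar": 0.6}


def to_grams(amount: float, unit: str, ingredient: str) -> float:
    """Convert a recipe quantity in any supported unit to grams."""
    if unit in G_PER_UNIT:
        return amount * G_PER_UNIT[unit]
    if unit in ML_PER_UNIT:
        # Volume units need a density before they can become a mass.
        return amount * ML_PER_UNIT[unit] * DENSITY_G_PER_ML[ingredient]
    raise ValueError(f"unknown unit: {unit}")


def remaining_grams(target_g: float, scale_reading_g: float) -> float:
    """Grams still to add; a negative result means too much was added."""
    return target_g - scale_reading_g


if __name__ == "__main__":
    target = to_grams(2, "tbsp", "sugar")
    print(f"target: {target:.1f} g, still to add: {remaining_grams(target, 12.0):.1f} g")
```

Because every target is expressed in grams, the projected feedback only has to show a single number: how much remains.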

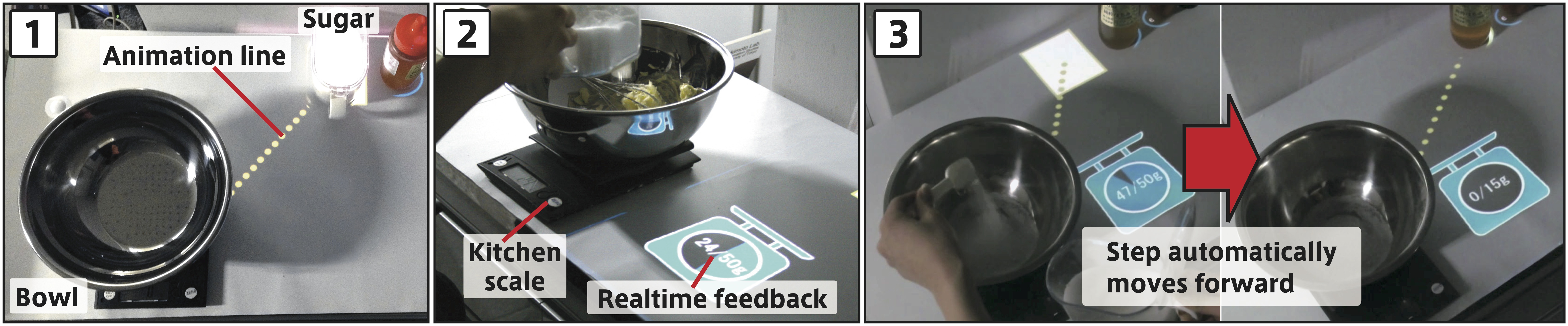

(1) Instructions are projected directly onto the kitchen counter and objects.

(2) The Bluetooth-enabled scaling device provides real-time feedback.

(3) The current step advances automatically based on measured weights and the movement of objects.
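Automatic step advancement can be modeled as a small state machine. In this model, each step carries a completion condition: either a target weight on the scale or the movement of a particular object. The following is a minimal sketch under assumptions, not the authors' implementation. The classes `Step` and `StepNavigator`, the 2 g tolerance, and the tare-per-step behavior are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass
class Step:
    """One recipe step with an optional automatic completion condition."""
    instruction: str
    target_g: Optional[float] = None      # complete when the scale reaches this weight
    watched_object: Optional[str] = None  # complete when this object is seen moving


class StepNavigator:
    """Hypothetical navigator that advances recipe steps from sensor events."""

    def __init__(self, steps: List[Step], tolerance_g: float = 2.0) -> None:
        self.steps = steps
        self.tolerance_g = tolerance_g
        self.index = 0

    @property
    def current(self) -> Optional[Step]:
        """The active step, or None once the recipe is finished."""
        return self.steps[self.index] if self.index < len(self.steps) else None

    def _is_done(self, step: Step, scale_g: float, moved: FrozenSet[str]) -> bool:
        if step.target_g is not None:
            # Assumes the scale was tared at the start of this step. Overshooting
            # beyond the tolerance does not advance, so the overshoot can be flagged.
            return abs(scale_g - step.target_g) <= self.tolerance_g
        if step.watched_object is not None:
            return step.watched_object in moved
        return False  # steps without a condition wait for confirm()

    def update(self, scale_g: float, moved: FrozenSet[str] = frozenset()) -> Optional[Step]:
        """Feed one sensor sample and advance if the current step is complete."""
        step = self.current
        if step is not None and self._is_done(step, scale_g, moved):
            self.index += 1
        return self.current

    def confirm(self) -> Optional[Step]:
        """Advance manually, e.g. for steps the sensors cannot observe."""
        if self.current is not None:
            self.index += 1
        return self.current
```

For example, a navigator built from a "weigh 100 g of flour" step followed by a "move the bowl" step stays on the first step while the scale reads 50 g. It moves to the second step once the reading falls within the tolerance of 100 g.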

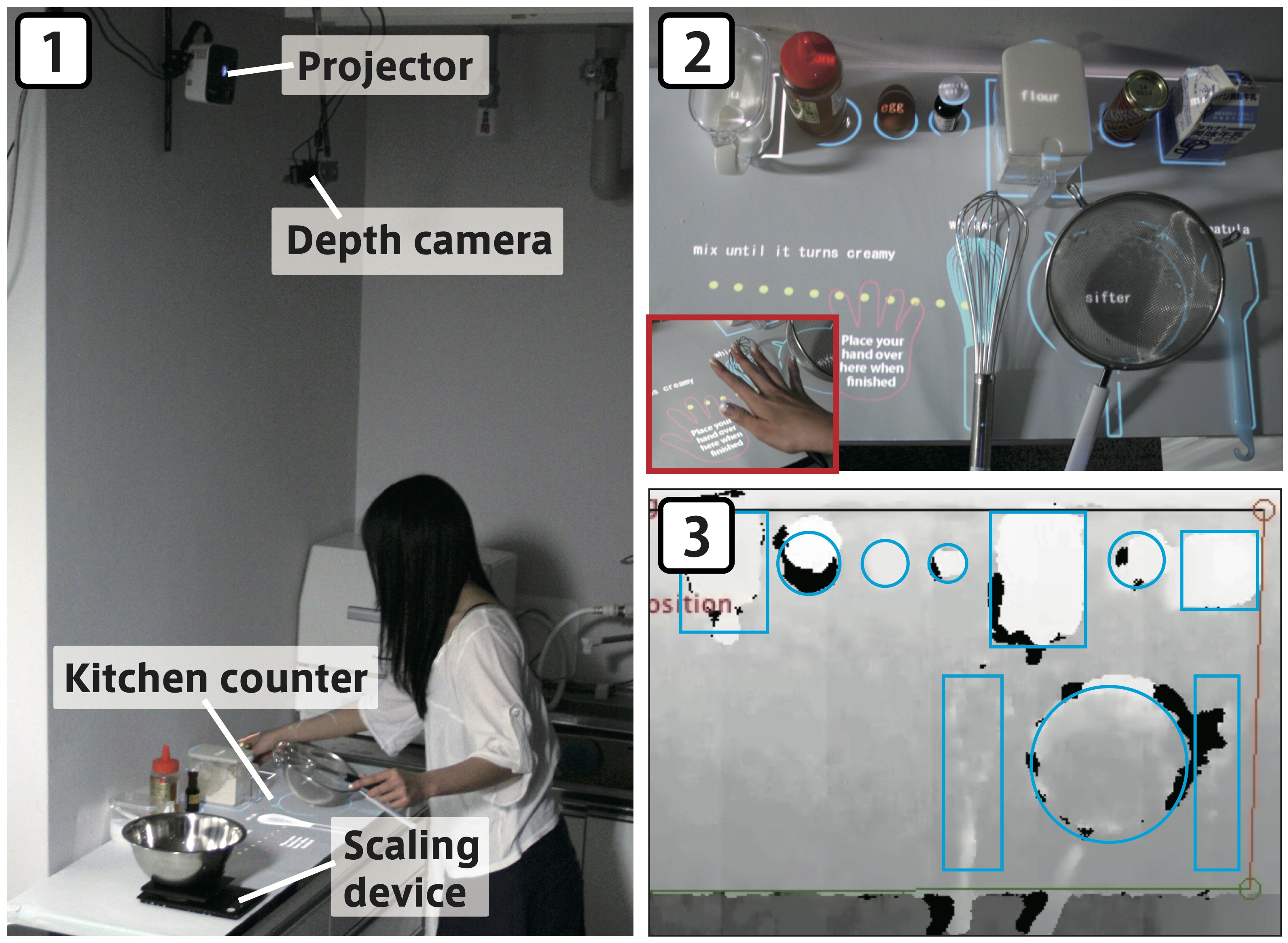

(1) System configuration of MimiCook.

(2) Real scene of the kitchen counter.

(3) Corresponding depth image for recognition.
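This page does not describe how the depth image is used for recognition. A common, simple technique for detecting whether an object on a counter has moved is depth differencing against a reference frame. The sketch below illustrates that generic technique and is not MimiCook's actual recognizer. The region format, the 15 mm noise threshold, the 30% changed-pixel rule, and the function names are assumptions chosen for illustration.

```python
from typing import List, Tuple

DepthFrame = List[List[float]]      # depth in millimetres, indexed [row][col]
Region = Tuple[int, int, int, int]  # (top, left, bottom, right), end-exclusive


def changed_fraction(reference: DepthFrame, current: DepthFrame,
                     region: Region, threshold_mm: float = 15.0) -> float:
    """Fraction of pixels in `region` whose depth changed by more than threshold_mm."""
    top, left, bottom, right = region
    changed = total = 0
    for y in range(top, bottom):
        for x in range(left, right):
            total += 1
            if abs(current[y][x] - reference[y][x]) > threshold_mm:
                changed += 1
    return changed / total if total else 0.0


def object_moved(reference: DepthFrame, current: DepthFrame, region: Region,
                 min_fraction: float = 0.3, threshold_mm: float = 15.0) -> bool:
    """Report movement when enough of the object's region changed depth."""
    return changed_fraction(reference, current, region, threshold_mm) >= min_fraction
```

In this scheme, the reference frame would be captured when a step begins, and the threshold would be set just above the camera's depth noise. Lifting a bowl off the counter then shows up as its region jumping back to counter depth.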

Publications

Ayaka Sato, Keita Watanabe, Jun Rekimoto, ShadowCooking: Situated Guidance for a Fluid Cooking Experience, 16th International Conference on Human-Computer Interaction (HCII 2014).

Ayaka Sato, Keita Watanabe, Jun Rekimoto, MimiCook: A Cooking Assistant System with Situated Guidance, 8th International Conference on Tangible, Embedded and Embodied Interaction (TEI 2014).